Does AI Just Suck?

Above, on the left, is an image of the late actor John Candy created by the unfathomably complicated AI image generator Midjourney, which no doubt based this image on thousands of pictures of its subject. On the right is a cartoon version of John Candy made by some animator, probably based on a handful of still photographs of him. Here is John Candy.

At a glance, which looks more like John Candy? The one generated by the immensely expensive, impossibly complex “artificial intelligence”? Or the one that some no-doubt underpaid Japanese animator in the 1980s scratched out as part of a far larger project? I can predict the objections - if you want to say that the image on the right is more abstracted than the one on the left, and thus it’s easier for your brain to resolve that into John Candy’s likeness, fine. But that still doesn’t change the fact that a human illustrator can render a vastly more convincing likeness than a hideously expensive machine learning system. That guy just looks nothing like John Candy. The set it’s drawn from has other terrible attempts. Here’s “Goldie Hawn”:

Here’s Goldie Hawn:

What an awful, awful effort. “Goldie Hawn” is not code for “an aggregation of the features of a generic blonde.” There are thousands and thousands of extant photographs of Goldie Hawn or most any celebrity out there, so that’s no excuse. And you’d think that, among the various tasks you might charge an AI image generator with, recreating faces that have been photographed many thousands of times would be one of the easiest. What just drives me mental about this stuff is that tons of people insist on pretending that these technologies work as intended! In the thread where these images appear, there are plenty of people who point out that they look nothing like their human counterparts, but also people going “Wow! Amazing!” That’s true of so much of AI-generated art; it feels like people have been told so relentlessly by the media that what we are choosing to call artificial intelligence is currently, right now, already amazing that they feel compelled to go along with it. But this isn’t amazing. It’s a demonstration of the profound limitations of these systems that people are choosing to see as a representation of their strengths.

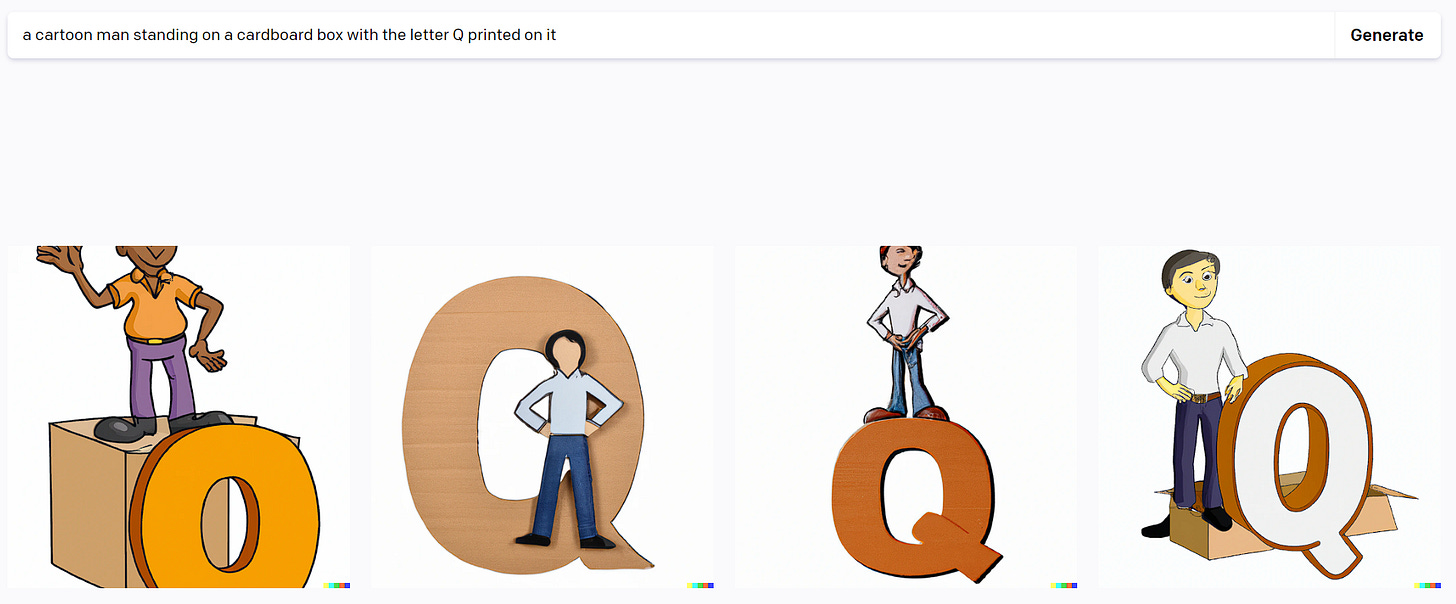

Here’s competitor DALL-E.

Obviously, none of the above satisfies the basic requirements of the very straightforward prompt. This is my experience again and again with these image generators: it’s not just that they’re typically artistically uninspired, failing to meet my subjective aesthetic standards. It’s that they so often fail to understand the most basic concepts of their prompts, demonstrating that there is no coherent internal logic to how they parse those prompts but instead an uninspiring network of proximal relations. I’ve tried similar things before and the system pretty much always has this weird associative approach - if I ask for a cat sitting next to a sign with a ? on it, there are cats, there are ?s, there are signs, but there are no cats sitting next to signs with ?s on them. This gets back to the point that people often reject angrily, that the human brain is in fact not an association network generator like these systems but rather has certain rule-bound processes genetically encoded into it, most prominently the language instinct.

My younger brother asked the Bing AI to compare the careers of LeBron James and Kirk Hinrich, who was a college star for Kansas and had a solid career as a point guard for the Chicago Bulls. One snippet:

LeBron James played for one year at the University of Akron before being drafted into the NBA in 2003. Kirk Hinrich played for four years at the University of Kansas and helped Kansas to consecutive Final Fours in 2002 and 2003. While both players had successful NCAA careers, it's difficult to compare them directly because they played in different eras and under different circumstances.

LeBron James, of course, never played college basketball at all. He and Kirk Hinrich were both drafted in the 2003 NBA draft, rendering the “different eras and circumstances” claim nonsensical. Note that this claim is a powerful example of why the frequent “sure they get facts wrong, but they’re reasoning correctly” defense doesn’t work: you can’t reason effectively from incorrect facts. Facts and reason are not extricable from each other. I’ve been telling people for a couple of decades that the attitude of “kids these days don’t need to learn facts because they have Google” is fundamentally flawed, as learning facts is an indispensable part of creating the mental connections in your brain that drive reasoning. This feels like a similar problem. ChatGPT, of course, has the same sort of issues that are just as deep and are apparently getting worse.

As I will go on saying, all of this would be much lower stakes and less aggravating if people had the slightest impulse toward restraint and perspective. But our media continues its white-knuckled dedication to speaking about AI in only the most absurdly effusive terms, terms that threaten to exceed the power of language. Here’s Ian Bremmer and Mustafa Suleyman in Foreign Policy: “Its arrival marks a Big Bang moment, the beginning of a world-changing technological revolution that will remake politics, economies, and societies.” They compared some interesting machine learning systems to the Big Bang, the literal creation of the universe. Where does this shit end? And what exactly can these systems actually do, right now, in the world of atoms? Besides stranding a bunch of kids for hours with terrible bus routes, I mean. What is their revolutionary reality, rather than their theoretical revolutionary potential?

I’ve written a bunch about how likely it is that this AI revolution is going to go up in smoke, such as here or here. In these pieces I’ve tended to focus on the broader philosophical questions and the terrible record of humanity’s efforts to predict the future. Typically I’ve left the actual generative powers of these existing systems alone. But what if this software just sucks? What if we’re all so desperate to move to the next era of human history that we talked ourselves into the idea that not-very-impressive predictive text and image compilers are The Future?

![Goldie Hawn (Wallpapers.com)](https://substackcdn.com/image/fetch/$s_!BT1k!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F0ae431f1-4998-4abf-8d8e-7c9ffaba84cb_1584x1600.jpeg)

I would have thought that quoting that Foreign Policy piece would prevent people from saying "nobody is going overboard with this stuff," but alas.

I hope your last paragraph is right. I'm really sick of living in a world seemingly dominated by the anti-human obsessions of tech evangelists and I would love for one of their attempts to remake civilization to fail in a very public, very noticeable way.